Multimodal AI: Building Products That See, Hear, and Reason

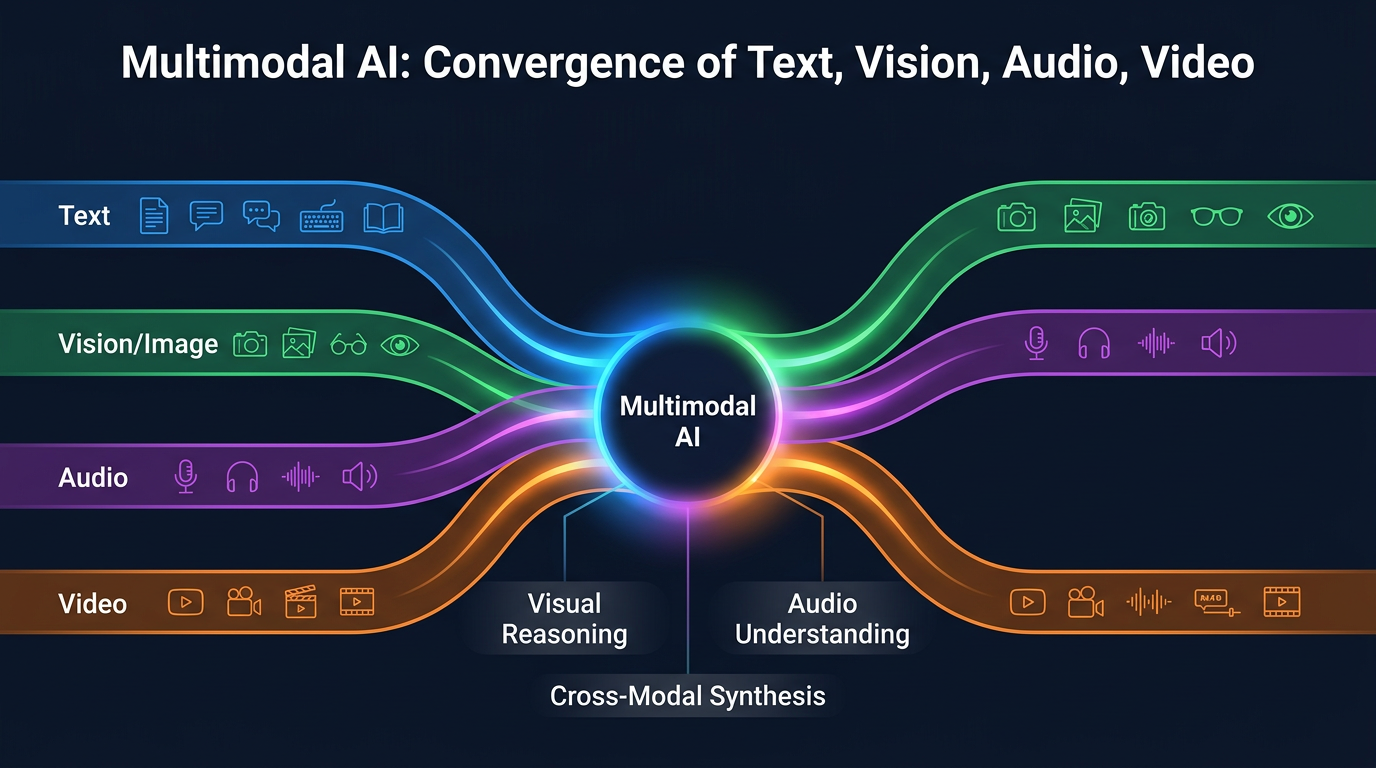

For most of the LLM era, AI products have been text-in, text-out. You type a question, the model types an answer. This was always a limitation of the technology, not of the ambition. The real world isn't text — it's images, sounds, video, sensor data, and spatial information, all arriving simultaneously and all needing to be understood in relation to each other. In 2026, frontier models don't just process text. They see screenshots, interpret diagrams, understand speech with emotion and nuance, analyze video frame by frame, and reason across all these modalities simultaneously. This changes what products can do, and it changes what products should be.

The Multimodal Landscape in 2026

Let's survey what's actually possible today:

Vision Understanding

Vision capabilities have progressed from "can identify objects in photos" to "can reason about complex visual information." Google's Gemini 3.1 can analyze architectural blueprints and identify structural concerns. Microsoft's Phi-4-reasoning-vision can solve multi-step math problems from handwritten whiteboard photos. Claude Sonnet 4.6 can review UI mockups and identify accessibility issues, inconsistent spacing, and UX anti-patterns.

The frontier isn't object recognition — it's visual reasoning. Can the model look at a manufacturing line photo and identify the equipment that's out of spec? Can it analyze a satellite image and estimate crop yield? Can it review a medical scan and flag anomalies that warrant specialist review? Increasingly, yes.

Audio Understanding

Audio went from "speech-to-text transcription" to "full audio understanding." GPT-5.4 and Gemini 3.1 can process raw audio and understand not just the words but the tone, emotion, pace, and context. They can identify multiple speakers, detect sarcasm, and understand that a customer saying "That's great" in a flat tone probably isn't happy.

This enables products like real-time meeting analysis that doesn't just transcribe but understands dynamics: "The engineering lead expressed strong concerns about the timeline in minutes 12-15, and the PM's response didn't address the specific technical blockers raised." That's not transcription — that's comprehension.

Video Understanding

Video is the most compute-intensive modality, but capability is advancing rapidly. Gemini 3.1 Pro can process hours of video and answer questions about specific moments, track objects and people across scenes, and summarize narrative arcs. This opens up applications in security (anomaly detection in surveillance footage), education (analyzing lecture engagement), sports (tactical analysis from game film), and quality control (identifying defects on a production line from camera feeds).

| Model | Text | Image | Audio | Video |

|---|---|---|---|---|

| GPT-4o | Excellent | Excellent | Good | Limited |

| Claude 3.5 | Excellent | Good | No | No |

| Gemini 1.5 | Excellent | Excellent | Good | Good |

| Llama 3.2 | Good | Basic | No | No |

Cross-Modal Reasoning

The real power isn't in any single modality — it's in reasoning across modalities simultaneously. "Here's a photo of my kitchen, a video of how I usually cook pasta, and a voice note about my dietary restrictions. What should I make for dinner tonight, and walk me through the recipe?" This query requires vision (understanding the kitchen and available ingredients), audio (interpreting the voice note), video (learning cooking preferences), and text (generating the recipe) — all integrated into a coherent response.

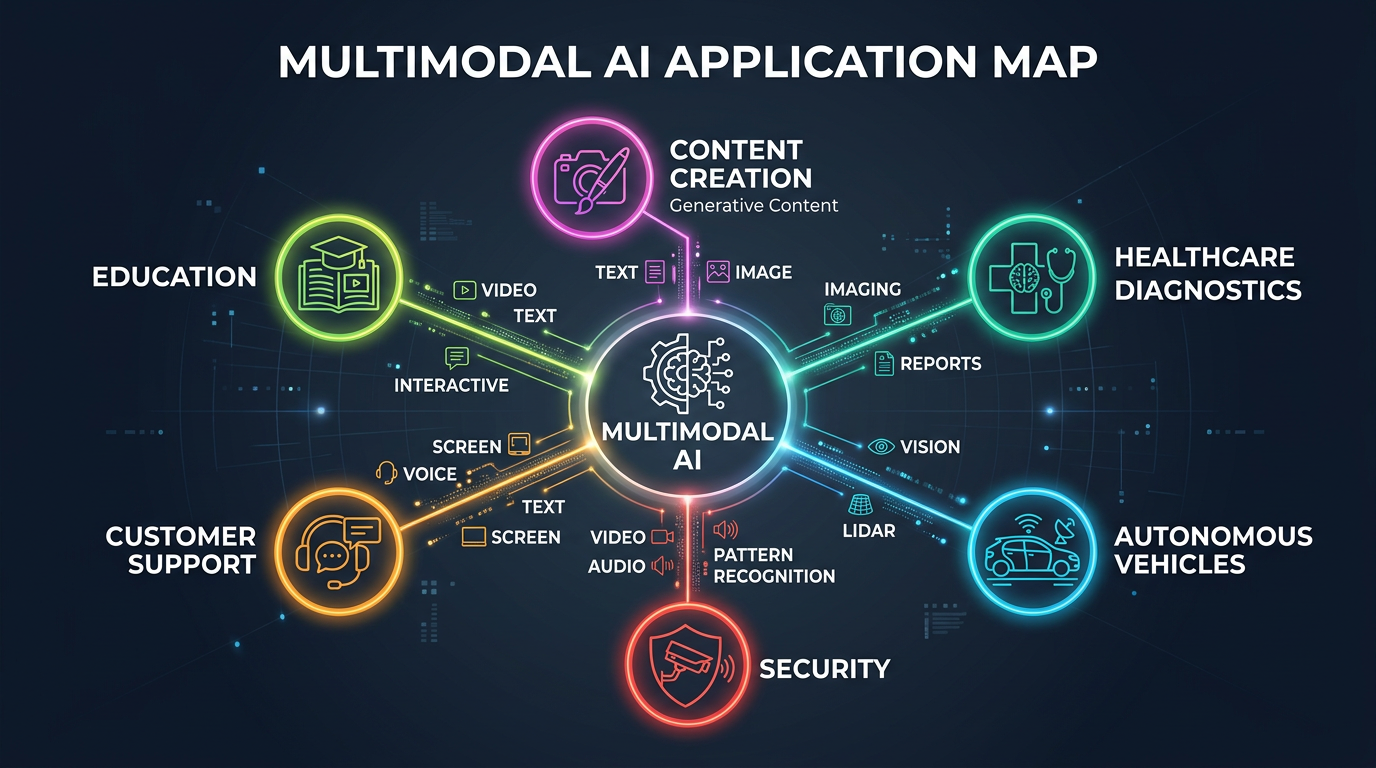

Product Categories That Multimodal Enables

Here's where I think the product opportunities are most compelling:

1. Visual Inspection and Quality Control

Manufacturing quality control has traditionally required either expensive specialized computer vision systems or human inspectors. Multimodal AI models can be deployed with a commodity camera and a prompt: "Flag any PCB that has solder bridges, misaligned components, or missing parts." The model doesn't need thousands of labeled defect images — it can reason from the description and reference images. This makes visual QC accessible to small and medium manufacturers who couldn't justify custom CV systems.

2. Multimodal Documentation

Imagine pointing your phone at a piece of equipment, saying "how do I replace the filter on this?" and getting step-by-step instructions that reference the specific model you're looking at, with visual annotations overlaid on the camera feed. This combines vision (identifying the equipment), language (retrieving and generating instructions), and spatial reasoning (indicating where to find the filter housing). Field service applications, consumer appliance support, and technical education are all obvious use cases.

3. Accessibility as a First-Class Feature

Multimodal AI makes real-time accessibility dramatically more powerful. A visually impaired user can point their phone at a restaurant menu and hear it read aloud with context — "The salmon is $24 and is marked as the chef's special." They can navigate unfamiliar buildings with a camera feed that identifies doors, stairs, and signage. They can participate in whiteboard sessions where the AI describes the diagram being drawn in real time. These aren't new ideas, but multimodal models make them practical and natural in a way that previous technology couldn't.

4. Healthcare Triage

A patient takes a photo of a skin condition, describes the symptoms verbally, and uploads their medication list. The multimodal system analyzes all three inputs and provides a risk assessment: "This appears consistent with contact dermatitis. Given your current medication list, topical corticosteroids would be appropriate, but check with your dermatologist given the interaction risk with your immunosuppressant." This isn't replacing doctors — it's making triage faster and more informed.

5. Creative Workflows

Product designers can sketch on a whiteboard, take a photo, and get a high-fidelity UI mockup generated from the sketch. Architects can voice-narrate design changes while pointing at a blueprint. Musicians can hum a melody and get a full arrangement with notation. The common thread: multimodal AI makes the interface between human creativity and digital tools more natural. The best ideas often come when you're sketching on a napkin, not when you're wrestling with Figma's pen tool.

Design Considerations for Multimodal Products

Building multimodal products is different from building text-based AI products. Here are the design principles that matter:

Let the User Choose the Input Modality

Don't force users to type when they could speak, or speak when they could point. The best multimodal interfaces let users combine modalities naturally: "Make this [points at element] look like this [shows reference image] but in blue." The UI should accept any combination of text, voice, image, and gesture without requiring the user to think about which mode they're in.

Show Your Work Across Modalities

When a model reasons across modalities, show the user what it saw. If it's analyzing an image, highlight the regions it focused on. If it's processing audio, show the transcript with timestamps. If it's watching video, reference specific frames. Transparency builds trust, and multimodal systems are complex enough that users need to verify the model understood the right things.

Graceful Degradation

Not every device has a camera. Not every environment allows voice input. Multimodal products must degrade gracefully when modalities are unavailable. If the user is on a desktop without a camera, the image-input feature should offer file upload as an alternative, not just disappear. If they're in a quiet library, voice input should fall back to text.

Latency Budgets by Modality

Users have different latency expectations for different modalities. Text completion should feel instant (<500ms). Image analysis can take 2-3 seconds without feeling slow. Video processing can take 30+ seconds and still feel acceptable if there's a progress indicator. Design your UX around these different tolerance levels rather than trying to make everything equally fast.

The Phi-4-Reasoning-Vision Breakthrough

Microsoft's Phi-4-reasoning-vision deserves special attention because it demonstrates that multimodal reasoning doesn't require a 500B-parameter model. At roughly 14B parameters, Phi-4 achieves competitive performance on visual reasoning benchmarks by specializing: instead of being a general-purpose model that also does vision, it's a reasoning-first model that uses vision as an input. This matters because it means multimodal AI is increasingly deployable on-device — on phones, in cameras, on edge hardware — without cloud round-trips. On-device multimodal AI enables real-time applications (industrial inspection, AR, accessibility) where cloud latency is unacceptable.

Modality-Appropriate Input

Let users provide input in the most natural format. Screenshot > description, voice > typing for some tasks.

Graceful Degradation

Always have a text fallback. Not all users can or want to use camera/microphone. Accessibility first.

Cross-Modal Verification

Use one modality to verify another. OCR + language understanding catches errors neither would alone.

Privacy by Design

Images and audio carry more sensitive data than text. Process locally when possible, minimize retention.

The Hard Problems

Let's be clear about what remains hard:

- Hallucination in visual reasoning: Models sometimes "see" things that aren't there, or misinterpret spatial relationships. A model might confidently describe a product as "red" when it's clearly orange. For safety-critical applications, visual hallucination is a serious concern.

- Audio in noisy environments: Current models handle clean audio well but struggle with heavy background noise, multiple simultaneous speakers, or strong accents. Real-world audio is messy.

- Video at scale: Processing long-form video (hours) is still computationally expensive and slow. Most applications need to pre-select relevant segments rather than feeding entire videos to the model.

- Evaluation: Measuring multimodal model quality is harder than measuring text quality. There's no equivalent of "perplexity" for a model's ability to understand a diagram or detect sarcasm in speech.

Conclusion

The text-only era of AI products is ending. The models that power the next generation of applications will see, hear, and reason — simultaneously. For product builders, this means thinking beyond chatbots and text interfaces. The most impactful products will be the ones that meet users where they are — sketching on a whiteboard, talking in a meeting, pointing at a broken machine — and understand them naturally, without forcing them to translate their intent into text first.

We spent two decades making humans adapt to computers. Multimodal AI finally makes computers adapt to humans.