The Intelligence Premium Is Eroding: What It Means for Knowledge Workers

For the last three hundred years, the global economy has operated on a simple assumption: human cognitive ability is scarce, and scarcity commands a premium. Lawyers, doctors, engineers, analysts, and strategists earned outsized wages because their ability to process information, reason through complexity, and generate novel solutions was in limited supply. That assumption is breaking. Not slowly — rapidly. And the consequences for knowledge workers, organizational design, and economic structure will be more profound than anything we've seen since the Industrial Revolution displaced artisan labor.

The Historical Intelligence Premium

Let's ground this in economics. The "intelligence premium," meaning the wage differential between cognitive and non-cognitive labor, has been one of the main drivers of income inequality in developed economies since the mid-20th century.

In 1980, a college graduate in the United States earned about 40% more than a high school graduate. By 2020, that gap had widened to nearly 80%. Economists Claudia Goldin and Lawrence Katz documented this extensively in The Race Between Education and Technology: as technology advanced, it increased the demand for cognitive skills faster than the education system could supply them. The result was a bidding war for "smart" people.

This dynamic created the knowledge economy as we know it: consulting firms billing $500/hour for analysts' thinking, law firms charging $1,000/hour for partners' judgment, and software engineers commanding $300K+ total compensation for their ability to translate complex problems into working code.

The premium wasn't for the output. It was for the cognition required to produce it.

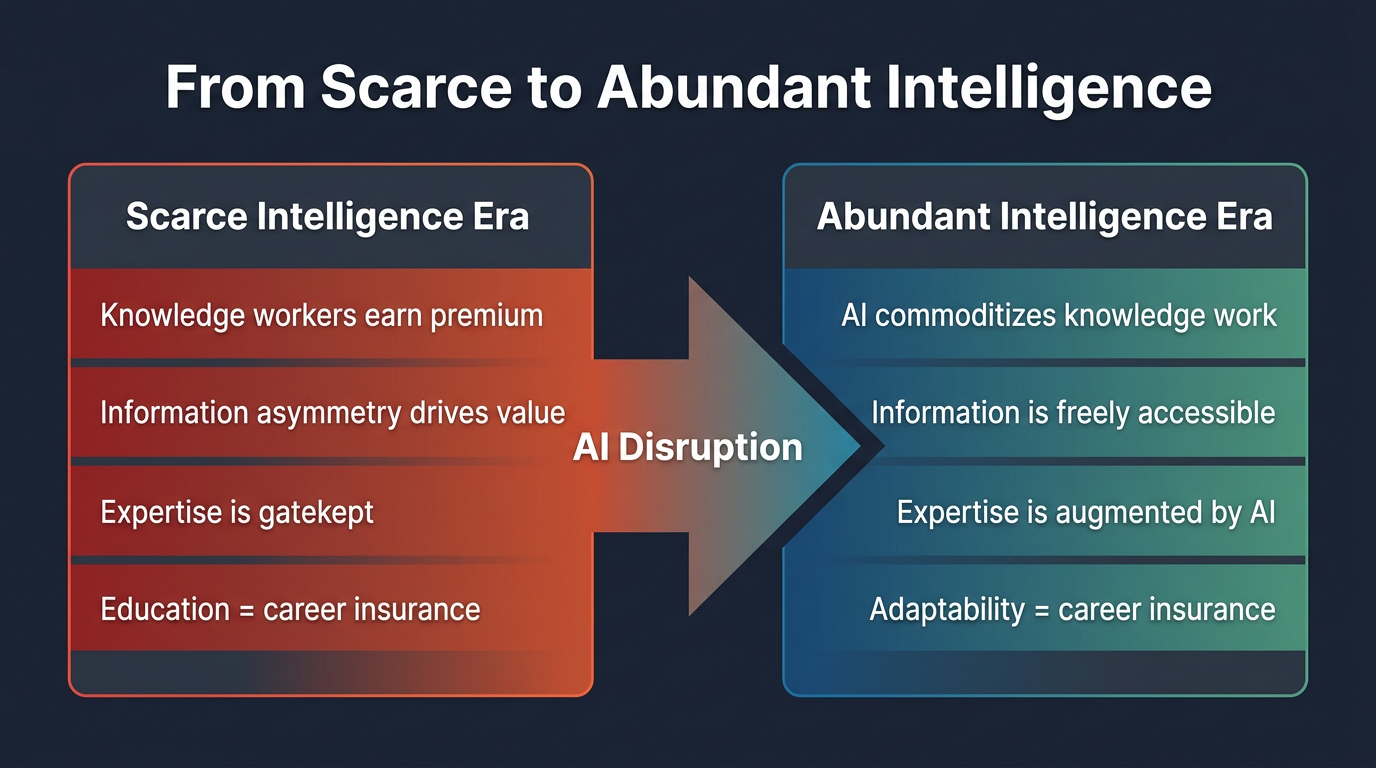

What Changes When Intelligence Becomes Abundant

The insight from Citrini Research's provocative "2028 Global Intelligence Crisis" thought experiment is worth wrestling with: what happens when the supply of cognitive capability — analysis, synthesis, reasoning, pattern recognition — increases by orders of magnitude almost overnight?

Classical economics gives us a clear answer: when supply of any input increases dramatically while demand grows more slowly, the price of that input falls. If AI can perform the cognitive tasks that knowledge workers are paid for at 1% of the cost and 100x the speed, the premium paid for human cognition must compress.
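To make the supply-and-demand logic concrete, here is a minimal sketch. It assumes a constant-elasticity demand curve and treats the new supply as fully absorbed by the market. Both the functional form and the elasticity values are illustrative assumptions, not estimates from the article or from Citrini Research.

```python
# Illustrative only: constant-elasticity demand, Q = A * P**(-elasticity).
# The functional form and the elasticity values below are assumptions,
# chosen to show the direction and rough size of the effect.

def price_after_supply_shift(supply_multiple: float, elasticity: float) -> float:
    """Return the new market-clearing price as a fraction of the old price.

    Inverting Q = A * P**(-elasticity) gives P = (Q / A)**(-1 / elasticity),
    so multiplying Q by `supply_multiple` multiplies P by
    supply_multiple**(-1 / elasticity).
    """
    if supply_multiple <= 0 or elasticity <= 0:
        raise ValueError("supply_multiple and elasticity must be positive")
    return supply_multiple ** (-1.0 / elasticity)


if __name__ == "__main__":
    # A hypothetical 100x increase in the supply of cognitive capability.
    for elasticity in (0.5, 1.0, 2.0):
        ratio = price_after_supply_shift(supply_multiple=100, elasticity=elasticity)
        print(f"elasticity={elasticity}: price falls to {ratio:.2%} of its old level")
```

The more elastic the demand (that is, the more new uses for cheap cognition appear as its price falls), the smaller the price drop. That is why the premium compresses rather than necessarily collapsing.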

This doesn't mean knowledge workers become worthless. It means the nature of the premium shifts. Let's trace the implications:

Implication 1: The "First Draft" Layer Gets Commoditized

A huge portion of knowledge work is "first draft" work — the initial analysis, the rough financial model, the first pass of legal research, the preliminary architecture diagram, the draft marketing copy. This work is essential but not where the deepest human judgment lies.

AI excels at first drafts. It can generate a 90th-percentile first pass of a market analysis in seconds. The McKinsey associate who spent 60 hours building a competitive landscape from scratch is now competing with a tool that does it in 60 seconds.

This doesn't eliminate the associate's role: someone still needs to validate, contextualize, and present the analysis. But it changes the economics. If the first draft took 60 hours and now takes about a minute, the remaining 20 hours of refinement and judgment are all that's left to bill for. Billable time falls from 80 hours to 20, so at an unchanged hourly rate the total value of the engagement drops by 75%.
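The arithmetic behind that claim can be sketched in a few lines. The hours come from the example above, and the rate reuses the $500/hour analyst figure mentioned earlier. Treating the rate as unchanged after AI adoption is an assumption made here for illustration.

```python
# Hours are taken from the article's example; holding the hourly rate
# constant before and after AI adoption is an illustrative assumption.

def engagement_revenue(draft_hours: float, refinement_hours: float, hourly_rate: float) -> float:
    """Billable revenue for one engagement under pure hourly billing."""
    return (draft_hours + refinement_hours) * hourly_rate


HOURLY_RATE = 500.0  # the $500/hour analyst rate cited earlier in the article

before = engagement_revenue(draft_hours=60, refinement_hours=20, hourly_rate=HOURLY_RATE)
after = engagement_revenue(draft_hours=0, refinement_hours=20, hourly_rate=HOURLY_RATE)
drop = 1 - after / before

print(f"Before AI: ${before:,.0f}")
print(f"After AI:  ${after:,.0f}")
print(f"Revenue drop: {drop:.0%}")
```

Under pure hourly billing the revenue drop equals the share of hours automated. A firm could offset that only by raising the rate on the remaining judgment-heavy hours or by moving to value-based pricing.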

Implication 2: The Premium Shifts from "Knowing" to "Judging"

When information retrieval and analysis are commoditized, the scarce human input becomes judgment — the ability to make decisions under uncertainty, to weigh competing stakeholder interests, to navigate political dynamics, to exercise taste.

This is a fundamental shift. The education system, the hiring process, and the promotion ladder in most organizations are optimized to identify and reward "knowing" — GPA, test scores, technical knowledge, domain expertise. They are not optimized to identify "judgment" — wisdom, emotional intelligence, ethical reasoning, strategic intuition.

"The paradox of abundant intelligence: the more AI can think for us, the more valuable it becomes to think differently from AI."

Implication 3: Organizational Pyramids Flatten

The traditional knowledge-work organization is a pyramid. At the bottom: junior analysts and associates doing grunt work (data gathering, modeling, drafting). In the middle: managers reviewing, refining, and coordinating. At the top: senior leaders making decisions and owning client relationships.

AI eliminates much of the bottom layer and compresses the middle. If a senior partner can use AI to do the work that previously required three associates, the organization needs fewer associates. The pyramid becomes a diamond — or, more provocatively, an hourglass.

This has already begun. Some law firms report using AI tools to reduce associate hours on document review by 60-80%. Consulting firms are using AI to generate first-draft deliverables that previously required teams of analysts. Financial institutions are automating equity research that junior analysts used to spend weeks producing.

Implication 4: The Entry-Level Bottleneck

Here's the dark side that few are discussing openly: if organizations need fewer junior knowledge workers, how do people develop the judgment and experience needed for senior roles?

The junior associate role wasn't just about producing output — it was a training ground. You learned to be a great lawyer by doing thousands of hours of legal research. You learned to be a great consultant by building hundreds of models. You learned to be a great engineer by writing mountains of code.

If AI handles the tasks that constituted the apprenticeship, the development pipeline breaks. We risk creating a generation of senior leaders who never went through the cognitive equivalent of boot camp. This is perhaps the most important structural challenge of the AI transition, and it has no obvious solution yet.

What Skills Command the New Premium?

If raw cognitive processing is no longer scarce, what is? I see four categories of human capability that will command increasing premiums:

1. Problem Framing

AI is extraordinarily good at solving well-defined problems. It's terrible at deciding which problems are worth solving. The ability to look at a messy, ambiguous situation and frame it as a crisp, solvable problem is deeply human and increasingly valuable. The best product managers, executives, and strategists are fundamentally problem framers.

2. Stakeholder Navigation

Organizations are political systems. Decisions aren't made by analysis alone — they're made through persuasion, negotiation, coalition-building, and trust. AI can produce the perfect analysis, but it can't convince the VP of Sales to change their compensation structure, or navigate the board dynamics around a risky acquisition. Human political intelligence remains irreplaceable.

3. Taste and Curation

When AI can generate infinite content, code, designs, and strategies, the scarce skill becomes selecting the right one. Taste — the ability to distinguish "good enough" from "excellent," to know which trade-offs matter and which don't — is a form of pattern recognition that current AI lacks. It requires lived experience, cultural context, and aesthetic judgment that can't be trained from data alone.

4. Accountability and Trust

Someone has to sign off. Someone has to be responsible when things go wrong. Someone has to look the client or the regulator in the eye. AI can advise, but it cannot be held accountable. The premium for human accountability — for putting your reputation and career on the line behind a decision — will increase as more of the analytical work is delegated to machines.

| Skill | AI Capability | Human Edge | Value Trajectory |
|---|---|---|---|
| Data analysis | Strong | Interpretation, context | ↓ Declining |
| Content writing | Strong | Voice, originality | ↓ Declining |
| Strategic thinking | Emerging | Judgment, risk tolerance | ↑ Appreciating |
| Relationship building | Weak | Trust, empathy | ↑ Appreciating |
| Creative direction | Emerging | Taste, cultural context | ↑ Appreciating |

The Transition Period: What to Do Now

We're in the early innings of this shift. The intelligence premium hasn't collapsed — yet. But the rate of AI capability improvement suggests that many cognitive tasks currently commanding high wages will be substantially automatable within 3-5 years. Here's how to position yourself:

- Invest in judgment, not just knowledge. Seek roles that require decisions under uncertainty, stakeholder management, and cross-functional coordination. These are harder to automate than analytical tasks.

- Become AI-augmented, not AI-resistant. The knowledge workers who thrive will be those who use AI to amplify their judgment, not those who compete with AI on cognitive processing speed. A lawyer who uses AI to do research in 10 minutes and spends 50 minutes on strategy beats a lawyer who does 60 minutes of research manually.

- Build a reputation for judgment. When AI can produce the analysis, the value shifts to the person whose name on the analysis signals quality and trustworthiness. Personal brand and track record become more important, not less.

- Diversify your cognitive portfolio. Specialists whose entire value is deep knowledge in a narrow domain are most at risk. Generalists who can connect dots across domains, manage ambiguity, and adapt to new contexts are better positioned.

Conclusion: The End of Cognitive Scarcity

The intelligence premium isn't going to zero. But it's being redefined. The premium will increasingly accrue to those who can do what AI cannot: frame problems worth solving, navigate human complexity, exercise taste and judgment, and bear accountability for outcomes. The transition will be disorienting, especially for those whose identity is tied to being "the smartest person in the room." In a world of abundant intelligence, being smart is table stakes. The question becomes: what do you do with that intelligence that a machine cannot?

References & Further Reading

- Acemoglu, The Simple Macroeconomics of AI (MIT, 2024)

- Eloundou, Manning, Mishkin & Rock, GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models

- Citrini Research, Intelligence Abundance and the 2028 Thought Experiment

- Goldin & Katz, The Race Between Education and Technology (Princeton)

- McKinsey Global Institute, Generative AI and the Future of Work in America

- Brookings Institution, What Jobs Are Affected by AI?