AI Literacy Is the New Computer Literacy: A Non-Technical Person's Learning Path

In 1995, "computer literacy" meant knowing how to use a mouse, save a file, and send an email. By 2005, it meant navigating the internet, using productivity software, and managing digital files. Today, it means nothing — because everyone has it. AI literacy is following the same trajectory, just 10x faster. In three years, not understanding how AI works will be as professionally limiting as not knowing how to use email was in 2010. The good news: you don't need a CS degree. You need a structured learning path and about 90 days of focused effort.

Why This Matters Now

Coursera reported a 234% increase in GenAI course enrollments in 2024, and that growth accelerated through 2025. OpenAI launched its Academy specifically for non-technical learners. Google, Microsoft, and Amazon all now offer AI literacy certifications aimed at business professionals. The market is screaming a message: AI literacy is table stakes.

But most people are learning wrong. They're either going too deep too fast (trying to understand transformer architectures when they should be learning prompting) or staying too shallow (watching YouTube explainers without ever building anything). What follows is the path I recommend to the product managers, marketers, operations leaders, and executives I advise.

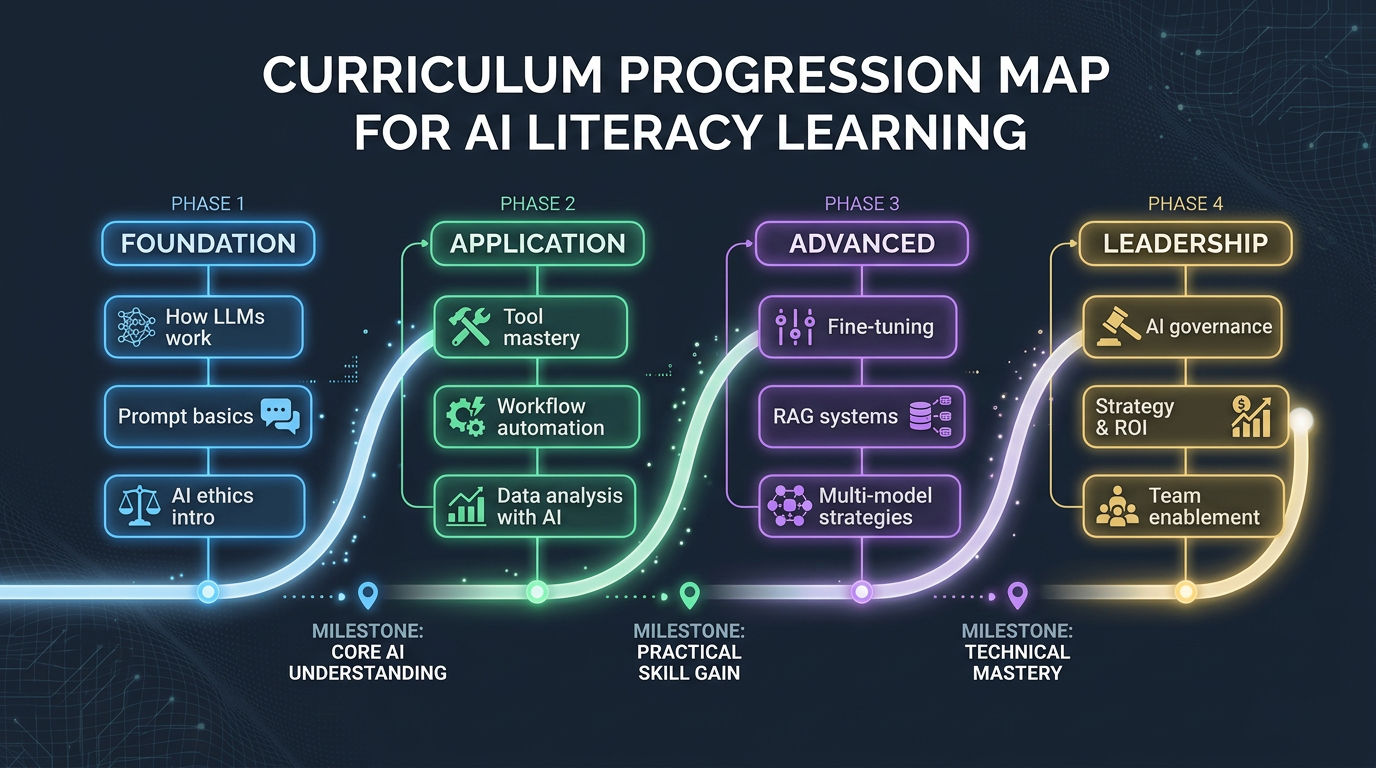

Level 1: Conceptual Foundation (Weeks 1-3)

The goal here isn't to understand the math. It's to build accurate mental models of what AI systems actually do — and, critically, what they don't do.

What You Need to Understand

- LLMs are sophisticated pattern matchers, not reasoners. They predict the next token based on statistical patterns in training data. When they appear to "reason," they're pattern-matching against reasoning-like sequences in their training corpus. This distinction matters because it explains both their impressive capabilities and their surprising failures.

- Training vs. inference. Training is the expensive, largely one-time process of building the model. Inference is the comparatively cheap, per-query process of using it. Understanding this explains why API access to a model like GPT-4 is priced per token and why fine-tuning exists.

- Tokens, context windows, and memory. AI doesn't "remember" your conversation the way a human does. It processes a fixed-size window of text, measured in tokens (roughly, word fragments). When the window fills, the oldest context drops off. This is why long conversations degrade and why RAG (Retrieval-Augmented Generation) exists.

- Hallucination is a feature, not a bug. LLMs generate plausible continuations of text. Sometimes plausible and true overlap. Sometimes they don't. The model doesn't "know" it's wrong because it doesn't have a concept of knowing. Expect this. Verify always.
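
To make the context-window idea concrete, here is a minimal Python sketch. It is an illustration under loud assumptions, not how any particular product works: `count_tokens` and `fit_to_window` are hypothetical helpers, a whitespace word count stands in for a real tokenizer, and real tools may summarize old messages rather than simply dropping them.

```python
def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def fit_to_window(messages: list[str], window_tokens: int) -> list[str]:
    """Keep the most recent messages whose combined token count fits the window."""
    kept: list[str] = []
    used = 0
    # Walk backwards from the newest message and stop once the budget is spent.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > window_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


conversation = [
    "My name is Dana and I manage the Q3 launch.",  # 10 "tokens"
    "Please draft a status update for the team.",   # 8 "tokens"
    "Make it shorter and more formal.",             # 6 "tokens"
]

# With a 15-token window, only the two newest messages fit.
visible = fit_to_window(conversation, window_tokens=15)
print(visible)
```

In this toy run the first message, the one that says who Dana is and what she manages, no longer fits. That is the mechanism behind an assistant "forgetting" details from early in a long conversation.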

Recommended Resources

- OpenAI Academy — Start here. Free, well-structured, designed for non-technical professionals.

- Coursera — Generative AI for Everyone (Andrew Ng) — The best non-technical course available. Ng explains complex concepts with exceptional clarity.

- Financial Times — Generative AI Explainer — Excellent visual explanation of how LLMs work.

The Test

By end of Week 3, you should be able to explain to a colleague — in plain language — why ChatGPT sometimes makes things up, why it can't access the internet (unless given tools), and why "prompt engineering" works. If you can do that, you have a solid conceptual foundation.

Level 2: Practical Fluency (Weeks 4-8)

Now you learn by doing. The goal is to become genuinely proficient at using AI tools for your actual work — not toy examples, but real deliverables.

Prompting as a Skill

Good prompting is not about magic words. It's about clear communication — the same skill that makes you good at writing briefs, specifications, and emails. The principles:

- Be specific about the output format. "Summarize this in 3 bullet points, each under 20 words" beats "summarize this."

- Provide context and constraints. "You are a financial analyst writing for a CFO audience. Use conservative assumptions." This is role-setting, and it works because it steers the model toward the patterns in its training data that match that role and audience.

- Use few-shot examples. Show the model what good output looks like. "Here's an example of the tone I want: [example]. Now write one for [topic]."

- Iterate, don't start over. Treat it like a conversation. "That's close, but make the tone more formal and add specific metrics" is more effective than re-prompting from scratch.
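
If it helps to see the principles above as a reusable template, here is a small Python sketch. `build_prompt` is a hypothetical helper invented for this illustration, and the section labels it uses ("Task:", "Output format:") are just one reasonable layout, not a required syntax. It only assembles text; you would paste the result into whichever tool you use.

```python
def build_prompt(role: str, task: str, output_format: str, example: str = "") -> str:
    """Assemble a prompt from role, task, optional example, and output format."""
    parts = [f"You are {role}.", f"Task: {task}"]
    if example:
        # Few-shot: show the model one sample of the desired tone or shape.
        parts.append(f"Here is an example of the tone I want:\n{example}")
    parts.append(f"Output format: {output_format}")
    return "\n\n".join(parts)


prompt = build_prompt(
    role="a financial analyst writing for a CFO audience",
    task="Summarize the attached quarterly report. Use conservative assumptions.",
    output_format="3 bullet points, each under 20 words",
    example="Revenue grew 4% year over year, driven by renewals.",
)
print(prompt)

# Iterating instead of starting over: the follow-up is added to the same
# conversation, so the model still sees the original request.
conversation = [
    prompt,
    "That's close, but make the tone more formal and add specific metrics.",
]
```

The point of the template is less the code than the checklist it enforces: every prompt names an audience, a task, and a format before it is sent.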

Tool Proficiency Tiers

Don't try to learn everything. Focus on the AI tools that directly intersect with your job function:

- Tier 1 (Everyone): ChatGPT/Claude for drafting, analysis, and brainstorming. Learn to use them as thought partners, not just answer machines.

- Tier 2 (Your Function): If you're in marketing, learn AI copywriting tools and image generation. If you're in operations, learn AI data analysis. If you're in product, learn AI prototyping tools.

- Tier 3 (Emerging): AI agents, custom GPTs, and workflow automation. This is where the real productivity multipliers live, but you need Tiers 1 and 2 first.

The Test

By Week 8, you should have completed at least 5 real work deliverables where AI meaningfully accelerated your output. Not "I asked ChatGPT to write my email" — something more like "I used Claude to analyze 50 customer interviews and identify the top 3 unmet needs, then validated its analysis against my own reading."

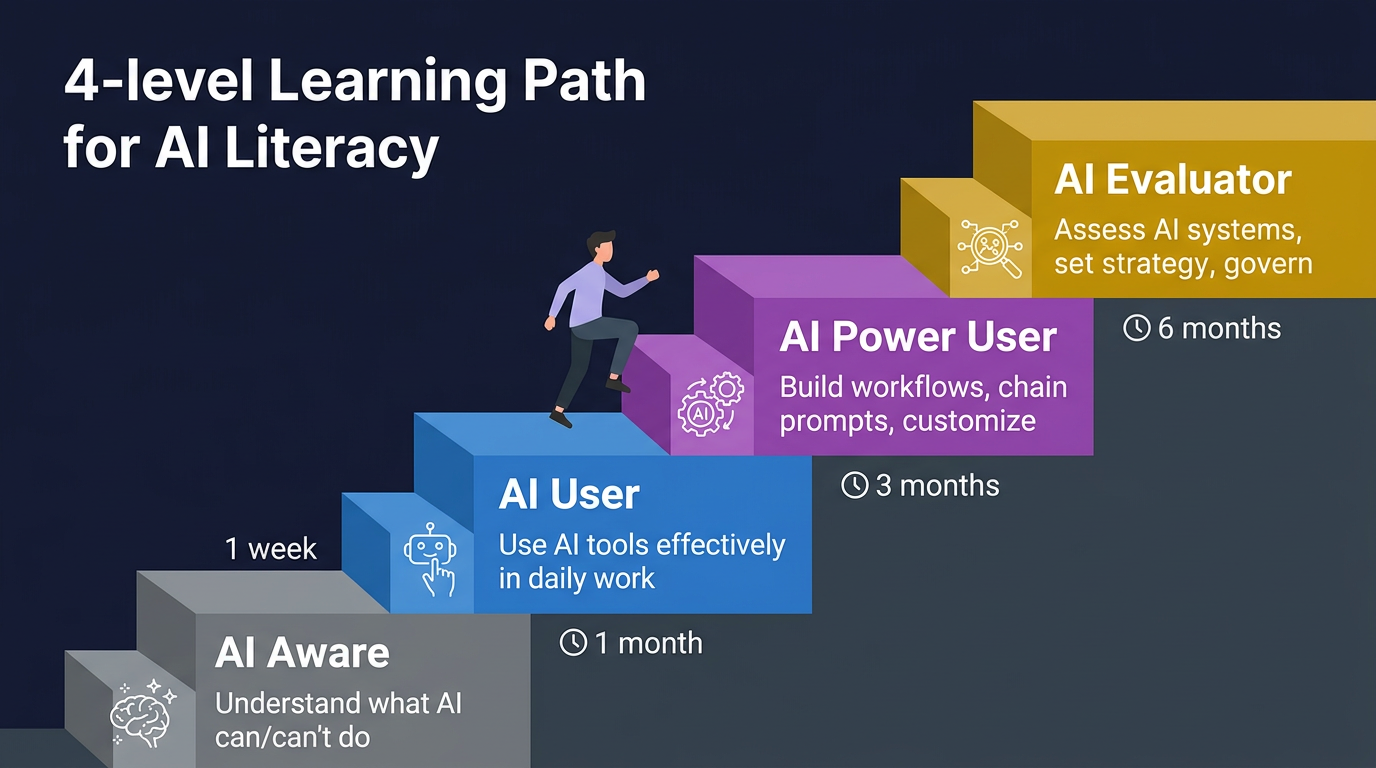

| Level | Profile | Can Do | Time Investment |
|---|---|---|---|
| AI Aware | Anyone curious | Explain AI capabilities & limits | 1 week |
| AI User | Daily tool user | Use AI tools effectively at work | 1 month |
| AI Power User | Workflow builder | Build custom prompts & automations | 3 months |
| AI Evaluator | Strategic decision-maker | Assess AI systems, set policy | 6 months |

Level 3: Critical Evaluation (Weeks 9-12)

This is where most AI education programs stop — and where the real value begins. You need to be able to evaluate AI products, vendors, and claims with a critical eye.

The BS Detection Framework

When a vendor tells you their product is "AI-powered," ask these questions:

- What's the model? Is it a fine-tuned LLM, a custom ML model, or a rules engine with an "AI" label? The answer determines the product's actual capabilities and limitations.

- What's the training data? Models are only as good as their data. If a vendor can't explain their data pipeline, that's a red flag.

- What's the accuracy, and how is it measured? "95% accurate" means nothing without knowing the benchmark, the failure modes, and whether 95% is actually sufficient for your use case.

- What happens when it's wrong? The best AI products have graceful degradation — human review loops, confidence scores, escalation paths. The worst just serve wrong answers with high confidence.

- What's the lock-in? Does the product improve with your data in ways that make switching costly? Is your data being used to train models that benefit competitors?
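
The accuracy question is easier to press on once you have seen how a headline number can mislead. The Python sketch below uses an invented benchmark (100 transactions, 5 fraudulent) and a deliberately useless "model" that never flags anything. The data and function names are made up for illustration; recall is a standard metric, but it is only one of several a vendor might reasonably report.

```python
def accuracy(predictions: list[bool], labels: list[bool]) -> float:
    """Share of cases where the prediction matches the true label."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def recall(predictions: list[bool], labels: list[bool]) -> float:
    """Share of truly positive cases that the model actually flagged."""
    flagged = sum(p and y for p, y in zip(predictions, labels))
    positives = sum(labels)
    return flagged / positives


# Invented benchmark: 100 transactions, 5 of them fraudulent (True).
labels = [True] * 5 + [False] * 95
# A "model" that never flags anything as fraud.
predictions = [False] * 100

print(f"accuracy: {accuracy(predictions, labels):.0%}")  # accuracy: 95%
print(f"recall:   {recall(predictions, labels):.0%}")    # recall:   0%
```

A product can truthfully claim "95% accurate" here while catching none of the cases you bought it to catch. Asking which benchmark was used and which errors it makes is how you find that out.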

Understanding AI Economics

You need a working understanding of AI costs to make good business decisions:

- Inference costs are dropping fast, by some estimates around 10x per year for a given level of capability. What's expensive today will likely be cheap tomorrow. Factor this into ROI calculations.

- Custom fine-tuning vs. prompting vs. RAG. Know the trade-offs: prompting is cheapest and most flexible, RAG adds your data without retraining, fine-tuning is most powerful but most expensive and least flexible.

- The "wrapper" problem. Many AI startups are thin wrappers around OpenAI's API. They add value through UX and workflow integration, but their moat is shallow. Evaluate accordingly.
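
To show how a falling inference price changes an ROI estimate, here is a rough Python sketch. Every number in it is invented, the 10x annual drop is treated as an assumption rather than a guarantee, and `projected_cost` is a hypothetical helper. It also ignores integration, review, and maintenance costs, which often matter more than the model bill.

```python
def projected_cost(cost_today: float, years: float, annual_drop: float = 10.0) -> float:
    """Cost after `years`, assuming the price falls by `annual_drop`x each year."""
    return cost_today / (annual_drop ** years)


# Invented numbers: a workload that costs $50,000/year to run today
# and saves $20,000/year in staff time.
cost_today = 50_000.0
annual_saving = 20_000.0

for year in range(3):
    cost = projected_cost(cost_today, year)
    net = annual_saving - cost
    print(f"year {year}: cost ${cost:,.0f}, net ${net:,.0f}")
```

Under these assumptions the workload loses money at today's prices and is clearly positive a year later. Try a slower decline (say `annual_drop=2.0`) before relying on the conclusion, since the answer is sensitive to that one parameter.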

The Test

By Week 12, you should be able to sit in a meeting where an AI vendor is pitching their product and ask questions that make the sales engineer sweat. You should be able to read an AI product announcement and quickly assess whether the claimed capabilities are plausible. You should be able to estimate, roughly, whether an AI solution makes economic sense for a given problem.

Level 4: Strategic Application (Ongoing)

This is where AI literacy becomes AI leadership. You can now identify opportunities to apply AI strategically — not just "use ChatGPT more" but "redesign this entire workflow around AI capabilities."

At this level, you're asking questions like:

- Which of our processes have high-volume, repetitive decision-making that AI could handle?

- Where are our employees spending time on tasks that AI does at 80% quality — and is 80% good enough?

- What new products or services become possible when AI reduces the cost of [X] by 100x?

- How should our hiring strategy change if AI augments the productivity of senior employees more than junior ones?

This level can't be reached through courses alone. It requires combining your AI literacy with deep domain expertise. The CMO who understands both marketing strategy and AI capabilities will outperform the AI expert who knows nothing about marketing and the marketer who knows nothing about AI.

Level 1: AI Aware (1 week)

Watch 3Blue1Brown's neural network series. Read "What Is ChatGPT Doing?" Try 10 ChatGPT prompts.

Level 2: AI User (1 month)

Use AI daily for 30 days. Master prompt patterns. Try 3 different tools (ChatGPT, Claude, Gemini).

Level 3: Power User (3 months)

Build a custom GPT. Create a prompt library. Automate one workflow end-to-end with AI.

Level 4: Evaluator (6 months)

Run an AI pilot project. Create evaluation criteria. Present AI strategy to leadership.

The 90-Day Commitment

Here's my ask: commit 90 days. One hour per day on weekdays, two hours on one weekend day. That's 7 hours a week, or roughly 90 hours of focused learning and practice. At the end, you won't be an AI engineer. But you'll be AI-literate: capable of using AI tools effectively, evaluating AI products critically, and identifying strategic AI opportunities in your domain.

That's not a nice-to-have in 2026. That's career insurance.