Agentic AI in 2026: From Copilots to Autonomous Digital Workers

In 2024, we had copilots — AI assistants that helped you write code, draft emails, and summarize documents. In 2025, we got agents — AI systems that could use tools, browse the web, and execute multi-step plans. In 2026, we're witnessing the emergence of something qualitatively different: autonomous digital workers that can own entire workflows end-to-end, recover from errors, and improve over time. The gap between the hype and reality has narrowed dramatically, but it hasn't closed. Here's an honest assessment of where we are.

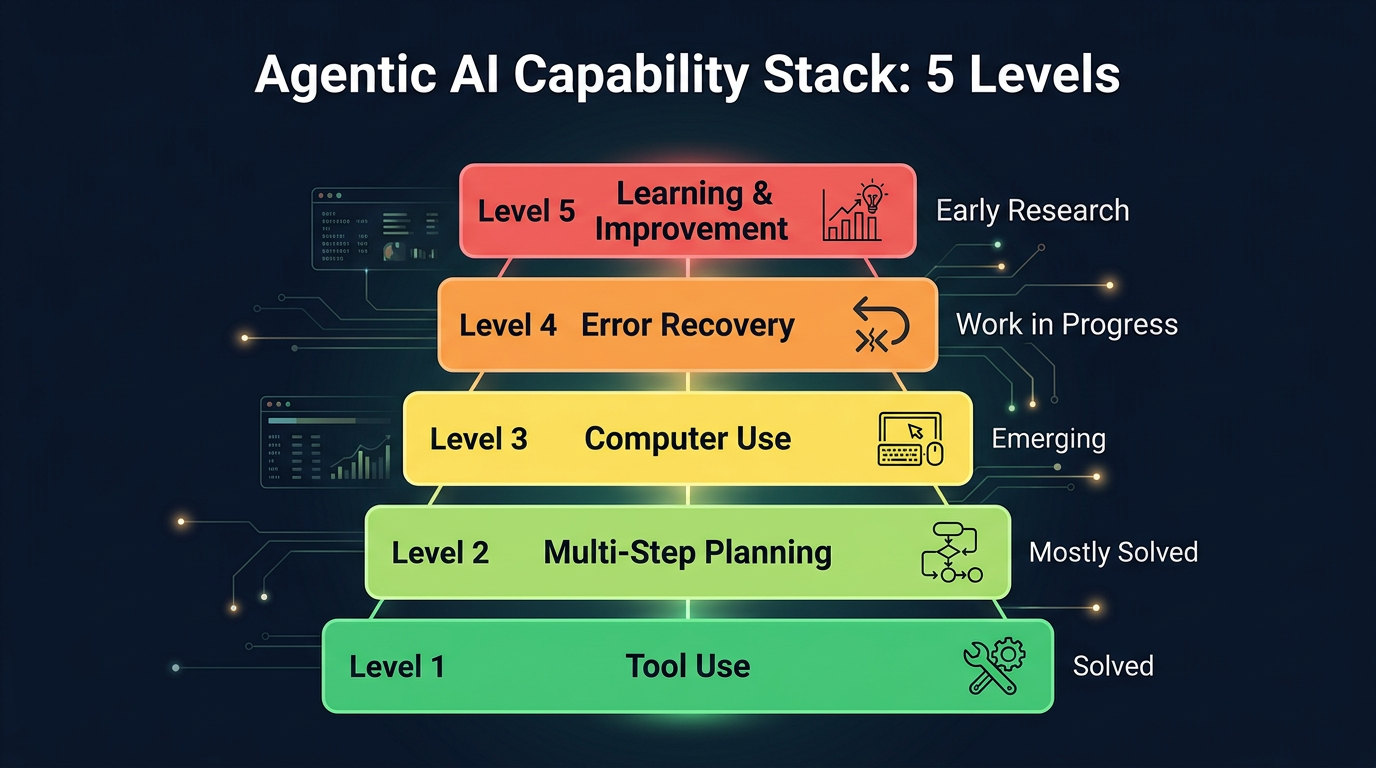

The Agent Capability Stack

To understand where agents are today, it helps to think about capabilities as a stack, from basic to advanced:

Level 1: Tool Use (Solved)

The most basic agentic capability is calling external tools — executing code, querying databases, calling APIs. This is a solved problem. GPT-5.4, Claude Sonnet 4.6, and even open-source models like Llama 4 can reliably select and call the right tool from a set of dozens. Function calling accuracy on standard benchmarks exceeds 95% for frontier models. The key insight from the last two years: tool use works best when tools have clear, well-documented interfaces. The model doesn't struggle with "should I call the search API or the database API?" It struggles when the tool's behavior is ambiguous or its error modes are undocumented.
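To make "clear, well-documented interfaces" concrete, here is a minimal sketch of a tool registry in which every tool declares its parameters and its failure modes up front. The `Tool` class, the `lookup_order` tool, the order data, and the error codes are all hypothetical and chosen only for illustration; this is not any vendor's function-calling API.

```python
from dataclasses import dataclass
from typing import Any, Callable


class ToolError(Exception):
    """Raised with a message the model can read and act on."""


@dataclass(frozen=True)
class Tool:
    name: str
    description: str             # what the tool does, in plain language
    parameters: dict[str, type]  # argument name -> expected type
    errors: tuple[str, ...]      # documented failure modes
    run: Callable[..., Any]


def lookup_order(order_id: str) -> dict:
    # Hypothetical in-memory data standing in for a real order system.
    orders = {"A100": {"status": "shipped"}}
    if order_id not in orders:
        raise ToolError(f"order_not_found: no order with id {order_id!r}")
    return orders[order_id]


REGISTRY = {
    "lookup_order": Tool(
        name="lookup_order",
        description="Return the status of a single order by its id.",
        parameters={"order_id": str},
        errors=("order_not_found",),
        run=lookup_order,
    )
}


def call_tool(name: str, arguments: dict[str, Any]) -> dict:
    """Validate a model-issued call and always return a structured result."""
    tool = REGISTRY.get(name)
    if tool is None:
        return {"ok": False, "error": f"unknown_tool: {name}"}
    unexpected = set(arguments) - set(tool.parameters)
    if unexpected:
        return {"ok": False, "error": f"unexpected_arguments: {sorted(unexpected)}"}
    for arg, expected in tool.parameters.items():
        if not isinstance(arguments.get(arg), expected):
            return {"ok": False, "error": f"bad_argument: {arg} must be {expected.__name__}"}
    try:
        return {"ok": True, "result": tool.run(**arguments)}
    except ToolError as exc:
        return {"ok": False, "error": str(exc)}
```

The design choice worth noting is that every failure comes back as a named, documented error string rather than a stack trace, so the model has something unambiguous to react to.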

Level 2: Multi-Step Planning (Mostly Solved)

Can the agent break a complex task into steps and execute them sequentially? For well-defined tasks — "find the top 10 customers by revenue, pull their support tickets from the last 30 days, and generate a summary report" — yes, reliably. The planning capability of frontier models has improved dramatically. GPT-5.4 can maintain coherent plans across 20-30 steps with tool calls, which covers the vast majority of business automation tasks.

Where it breaks down: tasks with ambiguous success criteria ("make this presentation better") or tasks that require understanding organizational context that isn't in the prompt ("file this expense report according to our company's policy").
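The well-defined example above (top customers, their recent tickets, a summary) can be sketched as an explicit plan: an ordered list of steps, each reading earlier results from shared state. The data, the step functions, and the fixed `TODAY` date are hypothetical stand-ins; a real agent would generate the plan and call real tools.

```python
from datetime import date, timedelta

# Hypothetical data standing in for a CRM and a ticketing system.
TODAY = date(2026, 1, 31)
REVENUE = {"acme": 900, "globex": 700, "initech": 400}
TICKETS = [
    {"customer": "acme", "opened": date(2026, 1, 20), "subject": "Login fails"},
    {"customer": "acme", "opened": date(2025, 11, 2), "subject": "Old issue"},
    {"customer": "initech", "opened": date(2026, 1, 5), "subject": "Invoice typo"},
]


def top_customers(state, n):
    """Step 1: rank customers by revenue and keep the top n."""
    ranked = sorted(REVENUE, key=REVENUE.get, reverse=True)
    return ranked[:n]


def recent_tickets(state, days):
    """Step 2: tickets for the selected customers opened in the last `days` days."""
    cutoff = TODAY - timedelta(days=days)
    wanted = set(state["top_customers"])
    return [t for t in TICKETS if t["customer"] in wanted and t["opened"] >= cutoff]


def summarize(state):
    """Step 3: turn the tickets into a plain-text report."""
    lines = [f"{t['customer']}: {t['subject']}" for t in state["recent_tickets"]]
    return "\n".join(lines) or "No recent tickets."


# The plan: ordered steps, each writing its result under a named key.
PLAN = [
    ("top_customers", top_customers, {"n": 2}),
    ("recent_tickets", recent_tickets, {"days": 30}),
    ("report", summarize, {}),
]


def execute(plan):
    state = {}
    for key, step, kwargs in plan:
        state[key] = step(state, **kwargs)
    return state


result = execute(PLAN)
print(result["report"])
```

Every step here has a checkable output, which is exactly what the ambiguous tasks lack: there is no equivalent function to write for "make this presentation better".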

Level 3: Computer Use (Emerging)

This is the breakthrough capability of 2025-2026. GPT-5.4's computer use mode and Claude's computer use API allow agents to interact with any software through its GUI — clicking buttons, filling forms, reading screens. This is transformational because it means agents can automate workflows in legacy enterprise software that has no API. Your 15-year-old ERP system with no REST endpoint? An agent can navigate its UI.

The current state is genuinely impressive but not yet reliable enough for unsupervised production use. On the OSWorld benchmark (real operating system tasks), the best agents solve roughly 40-50% of tasks end-to-end, up from about 12% for the best model when the benchmark was introduced in 2024. For specific, well-defined GUI workflows (filling out a form in Salesforce, creating a ticket in Jira, downloading a report from a dashboard), success rates are much higher, often above 85%.

Level 4: Error Recovery and Self-Correction (Work in Progress)

The difference between a demo agent and a production agent is error handling. Demo agents follow the happy path and break spectacularly when something unexpected happens. Production agents need to recognize when something has gone wrong, diagnose the failure, and either retry with a different approach or escalate to a human.

This is the hardest unsolved problem in agentic AI. Current agents can handle anticipated errors (API returns 404, retry with different parameters). They struggle with unanticipated errors (the website redesigned its login page, the database schema changed, the email bounced because the recipient's inbox is full). Progress is being made — chain-of-thought reasoning and self-reflection patterns help — but we're not at the point where you can deploy an agent and forget about it.
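The "retry with a different approach or escalate to a human" pattern can be sketched as a loop over fallback strategies that verifies each result and raises a structured escalation when everything fails. The names `run_with_recovery`, `Escalation`, and the two fetch strategies are hypothetical, and the sketch only covers failures that surface as exceptions or fail an explicit check; it does not solve the unanticipated-error problem described above.

```python
class Escalation(Exception):
    """Signals that a human needs to take over, carrying the failure history."""

    def __init__(self, task, failures):
        super().__init__(f"{task}: all strategies failed")
        self.task = task
        self.failures = failures


def run_with_recovery(task, strategies, verify):
    """Try each (name, callable) strategy in order until one passes `verify`."""
    failures = []
    for name, strategy in strategies:
        try:
            result = strategy()
        except Exception as exc:  # record the diagnosis, then try the next approach
            failures.append(f"{name}: {type(exc).__name__}: {exc}")
            continue
        if verify(result):
            return result
        failures.append(f"{name}: result failed verification")
    raise Escalation(task, failures)


# Hypothetical strategies: the primary API is down, the export fallback works.
def fetch_via_api():
    raise ConnectionError("503 from reporting API")


def fetch_via_export():
    return {"rows": 3}


report = run_with_recovery(
    "fetch weekly report",
    [("api", fetch_via_api), ("export", fetch_via_export)],
    verify=lambda r: r.get("rows", 0) > 0,
)
print(report)
```

Keeping the failure history on the escalation matters as much as the retry itself: the human who picks up the task sees what was already attempted and why it failed.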

Level 5: Learning and Improvement (Early Research)

Can agents learn from their mistakes and improve over time without retraining? This is the frontier. Some approaches show promise — tool-use feedback loops, trajectory optimization, and experience replay — but no production system has demonstrated robust, autonomous improvement. This is the gap between a "digital worker" and a "digital employee."
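One of the simpler ideas in this space, feeding lessons from earlier runs back in as in-context notes, can be sketched in a few lines. The `ExperienceMemory` class, the keyword-overlap retrieval, and the prompt format are all assumptions made for illustration; this is not robust autonomous improvement, and a real system would likely use a stronger relevance measure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lesson:
    task: str
    succeeded: bool
    note: str


class ExperienceMemory:
    """Stores notes from past runs and recalls those relevant to a new task."""

    def __init__(self):
        self._lessons = []

    def record(self, task, succeeded, note):
        self._lessons.append(Lesson(task, succeeded, note))

    def recall(self, task, limit=3):
        # Naive relevance: count words shared between the new and past task.
        words = set(task.lower().split())
        scored = [(len(words & set(l.task.lower().split())), l) for l in self._lessons]
        relevant = [pair for pair in scored if pair[0] > 0]
        relevant.sort(key=lambda pair: pair[0], reverse=True)
        return [lesson for _, lesson in relevant[:limit]]


def build_prompt(task, memory):
    """Prepend recalled lessons to the task so the model sees them in context."""
    notes = [f"- {lesson.note}" for lesson in memory.recall(task)]
    if not notes:
        return f"Task: {task}"
    return "Lessons from earlier runs:\n" + "\n".join(notes) + f"\n\nTask: {task}"


memory = ExperienceMemory()
memory.record("export report from dashboard", False,
              "Wait for the export button to become enabled.")
memory.record("reset user password", True, "Use the admin console.")
print(build_prompt("download report from dashboard", memory))
```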

| Capability | 2024 State | 2026 State | Maturity |
|---|---|---|---|
| Tool Use | Basic API calls | Complex multi-tool orchestration | Mature |
| Planning | Simple sequences | Multi-step with replanning | Emerging |
| Computer Use | Experimental | Browser and desktop automation | Emerging |
| Error Recovery | Retry loops | Adaptive strategies, fallbacks | Emerging |
| Learning | None | In-context examples, memory | Early |

Where Agents Deliver Value Today

Despite the limitations, there are domains where AI agents are already delivering measurable business value in production:

Software Engineering

AI coding agents are the most mature agent category. Tools like Cursor, Claude Code, and Codex's agent mode can now handle multi-file refactoring, write and run tests, fix CI failures, and implement features from natural language specs. For experienced developers, these agents act as a force multiplier: they do not replace the developer, but they handle the mechanical parts of implementation so the developer can focus on architecture and design.

The numbers are striking: teams using agentic coding tools report 30-60% reductions in time-to-merge for standard feature work. For bug fixes with clear reproduction steps, agents can autonomously diagnose, fix, and submit PRs that pass CI in roughly 40% of cases.

Customer Support

AI agents handling Tier 1 support have gone from "experimental" to "expected." The best implementations (Intercom's Fin, Zendesk's AI agents) resolve 50-70% of inbound tickets without human involvement. The key is that support is a domain with clear success criteria (did the customer's issue get resolved?), bounded action spaces (look up order, issue refund, reset password), and abundant training data (years of support transcripts).

Data Analysis and Reporting

Agents that can query databases, generate visualizations, and write narrative summaries are delivering real value in business intelligence. The workflow: "What were our top performing campaigns last quarter, and why?" The agent queries the data warehouse, runs statistical analysis, identifies the outliers, and generates a Slack-ready summary. This used to take an analyst 4-6 hours. The agent does it in 2 minutes.
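The query, analyze, summarize workflow can be sketched with the standard library alone. The `campaigns` table, its figures, and the z-score threshold are hypothetical, and the sketch covers only ranking and outlier flagging; the "and why?" part of the question needs context this example does not model.

```python
import sqlite3
import statistics

# Hypothetical warehouse: an in-memory table of campaign revenue.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE campaigns (name TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO campaigns VALUES (?, ?)",
    [
        ("spring_sale", 120.0),
        ("newsletter", 100.0),
        ("webinar", 110.0),
        ("referral", 90.0),
        ("launch", 400.0),
    ],
)


def summarize_campaigns(conn, z_threshold=1.5):
    """Query revenue, flag high outliers by z-score, and write a short summary."""
    rows = conn.execute(
        "SELECT name, revenue FROM campaigns ORDER BY revenue DESC"
    ).fetchall()
    revenues = [revenue for _, revenue in rows]
    mean = statistics.mean(revenues)
    stdev = statistics.pstdev(revenues)
    outliers = [
        name for name, revenue in rows
        if stdev > 0 and (revenue - mean) / stdev > z_threshold
    ]
    top_name, top_revenue = rows[0]
    text = (
        f"Top campaign: {top_name} ({top_revenue:.0f}). "
        f"Outliers above the mean: {', '.join(outliers) or 'none'}."
    )
    return {"top": top_name, "outliers": outliers, "text": text}


summary = summarize_campaigns(conn)
print(summary["text"])
```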

Back-Office Automation

Invoice processing, expense reconciliation, compliance checks, vendor onboarding — these repetitive, rule-heavy processes are ideal for computer-use agents. The ROI is clear because the alternative is human labor doing repetitive work that is both boring and error-prone.

Where Agents Are Still Hype

Let's be equally honest about where the promise exceeds the reality:

- Fully autonomous SDRs and sales agents: Despite massive investment, autonomous AI sales agents still underperform human sales development representatives (SDRs) on conversion rates for complex B2B sales. They work for simple, transactional sales motions but struggle with relationship building and objection handling.

- "Just tell the AI what to build" product management: The dream of describing a product in natural language and having an agent build it end-to-end is still a dream. Agents can implement well-specified features, but product management — understanding user needs, making trade-offs, navigating organizational priorities — remains deeply human.

- Autonomous scientific research: Despite impressive benchmarks on academic tasks, AI agents are not yet independently conducting novel research. They're powerful research assistants, but the creative leaps and experimental intuition that define scientific breakthroughs remain human.

The Trust Architecture Problem

The biggest barrier to agent adoption isn't capability — it's trust. Specifically, organizations need answers to three questions:

- What can the agent do? Clear capability boundaries. Can it send emails? Transfer money? Delete data?

- What should the agent do? Policy enforcement. Even if it can approve a $50K purchase order, should it do so without human review?

- What did the agent do? Audit trails. When something goes wrong — and it will — can we trace exactly what the agent did and why?

The companies winning in the agent space are the ones solving trust, not just capability. Anthropic's approach (Constitutional AI, detailed tool-use policies, human-in-the-loop checkpoints) is a good example. The model takes autonomous actions within a defined sandbox and escalates to humans for anything outside that boundary.
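The three trust questions map onto three small mechanisms: an allowlist (what can the agent do), approval limits (what should it do), and an audit log (what did it do). The sketch below is a hypothetical `Guardrail` class with made-up action names and a made-up $10,000 limit; it does not describe Anthropic's or any other vendor's implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrail:
    allowed_actions: set[str]          # what the agent can do
    approval_limits: dict[str, float]  # above this amount, a human must review
    audit_log: list[dict] = field(default_factory=list)  # what the agent did

    def decide(self, action: str, amount: float = 0.0, reason: str = "") -> str:
        if action not in self.allowed_actions:
            decision = "deny"
        elif amount > self.approval_limits.get(action, float("inf")):
            decision = "needs_human_review"
        else:
            decision = "allow"
        # Every decision is logged, including denials, with the stated reason.
        self.audit_log.append(
            {"action": action, "amount": amount, "reason": reason, "decision": decision}
        )
        return decision


guard = Guardrail(
    allowed_actions={"send_email", "approve_po"},
    approval_limits={"approve_po": 10_000.0},  # hypothetical policy threshold
)
print(guard.decide("approve_po", amount=50_000, reason="Vendor renewal"))
print(guard.decide("delete_data", reason="Cleanup"))
print(guard.decide("send_email", reason="Order confirmation"))
```

Logging the agent's stated reason alongside the decision is what makes the "and why" half of the audit question answerable later.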

The "Centaur" Model: Human + Agent Teams

The most productive deployments of AI agents in 2026 are not fully autonomous — they're "centaur" teams where humans and agents collaborate with clear division of labor. The agent handles volume and velocity: processing 1,000 support tickets, reviewing 200 code PRs, analyzing 50 vendor contracts. The human handles judgment and exceptions: the angry enterprise customer, the architectural decision, the contract clause that doesn't fit standard templates.

This is the pragmatic sweet spot. Pure automation is fragile. Pure human work is slow. The centaur model captures the speed of agents and the judgment of humans.
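The division of labor can be sketched as a routing rule: routine, high-confidence work goes to the agent, and exceptions go to a person. The `Ticket` fields, the `ROUTINE` categories, and the 0.8 confidence threshold are hypothetical, and the sketch assumes the agent reports a usable confidence score, which is itself an open problem.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    id: int
    category: str
    confidence: float          # agent's self-reported confidence, 0.0 to 1.0
    enterprise: bool = False   # high-stakes accounts always get a human


# Hypothetical set of categories the agent is trusted to handle alone.
ROUTINE = {"password_reset", "order_status", "refund"}


def route(ticket: Ticket, min_confidence: float = 0.8) -> str:
    """Return 'agent' for routine, confident cases and 'human' for exceptions."""
    if ticket.enterprise or ticket.category not in ROUTINE:
        return "human"
    if ticket.confidence < min_confidence:
        return "human"
    return "agent"


tickets = [
    Ticket(1, "password_reset", 0.95),
    Ticket(2, "refund", 0.55),
    Ticket(3, "contract_dispute", 0.90),
    Ticket(4, "order_status", 0.99, enterprise=True),
]
for ticket in tickets:
    print(ticket.id, route(ticket))
```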

Real Value Now

Code assistants, data analysis agents, customer support bots, document processing. Proven ROI and user adoption.

Promising but Early

Research agents, sales automation, design copilots. Working demos but reliability gaps remain.

Mostly Hype (For Now)

Fully autonomous workers, self-managing AI teams. Compelling vision but years from reliable production use.

Underrated

Internal tooling agents, testing automation, knowledge management. Quiet wins with enormous productivity impact.

What's Next: The 2026-2027 Trajectory

Based on current trajectories, here's what I expect in the next 12-18 months:

- Computer use becomes reliable. OSWorld-style benchmarks will exceed 70%, making GUI automation viable for production use in controlled environments.

- Multi-agent orchestration matures. Instead of one agent doing everything, we'll see specialized agents (researcher, coder, reviewer, deployer) collaborating on complex tasks.

- Agent marketplaces emerge. Companies will buy and deploy pre-built agents for specific workflows, the way they buy SaaS today.

- Regulation arrives. The EU AI Act's provisions for "high-risk AI systems" will increasingly apply to autonomous agents, especially in finance, healthcare, and HR.
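The multi-agent orchestration item in the list above can be sketched as a handoff pipeline with a review loop. Each "agent" here is a plain function standing in for a model-backed worker, and the reviewer's rule (approve from the second revision on) is an arbitrary stand-in for real review logic; all names are hypothetical.

```python
def researcher(task):
    """Gather requirements and start the shared context."""
    return {"task": task, "notes": f"requirements for {task}", "version": 0}


def coder(ctx):
    """Produce a new revision, taking any reviewer feedback into account."""
    version = ctx["version"] + 1
    return {**ctx, "version": version, "code": f"v{version} of {ctx['task']}"}


def reviewer(ctx):
    """Return (approved, feedback). Stand-in rule: approve from revision 2 on."""
    if ctx["version"] >= 2:
        return True, ""
    return False, "add tests"


def deployer(ctx):
    return {**ctx, "deployed": True}


def orchestrate(task, max_rounds=3):
    """Research once, then loop coder -> reviewer until approved or out of rounds."""
    ctx = researcher(task)
    for _ in range(max_rounds):
        ctx = coder(ctx)
        approved, feedback = reviewer(ctx)
        if approved:
            return deployer(ctx)
        ctx = {**ctx, "feedback": feedback}
    return {**ctx, "deployed": False}  # give up and leave it for a human


outcome = orchestrate("export feature")
print(outcome["code"], outcome["deployed"])
```

The bounded `max_rounds` is the important design choice: without it, a coder and reviewer that never agree would loop indefinitely.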

Conclusion

We're in the messy middle of the agent revolution. The technology is genuinely impressive — far beyond what most people expected even 18 months ago. But the gap between "impressive demo" and "reliable production system" is where all the hard engineering lives. The companies that will win are not the ones with the most capable agents, but the ones that solve the trust, reliability, and error-recovery problems that make agents deployable in the real world.

The copilot era was about making humans faster. The agent era is about making workflows autonomous. We're not fully there yet, but we're close enough that every product team should be asking: "Which of our workflows could an agent own?"

References & Further Reading

- OSWorld: Benchmarking Multimodal Agents for Open-Ended Tasks in Real Computer Environments

- Anthropic — Developing Computer Use for Claude

- OpenAI — Computer-Using Agent (CUA)

- Cognition — Devin: The First AI Software Engineer

- McKinsey — The Economic Potential of Generative AI

- Lilian Weng — LLM Powered Autonomous Agents